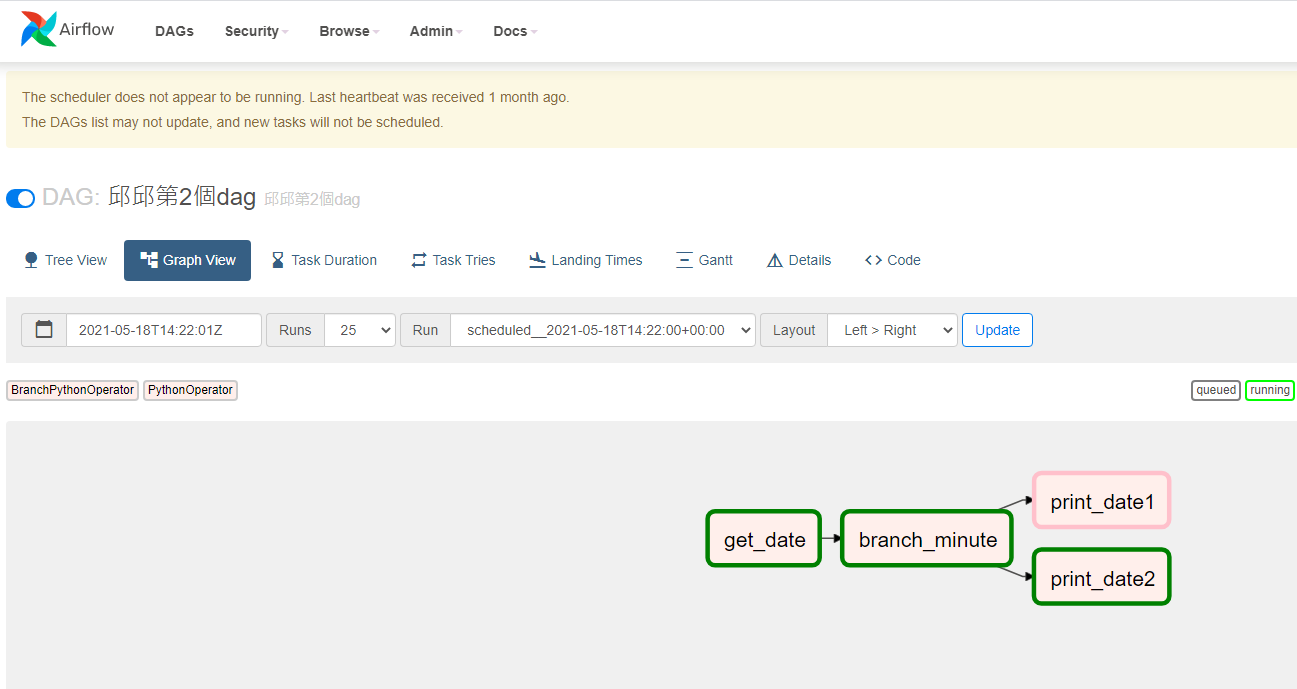

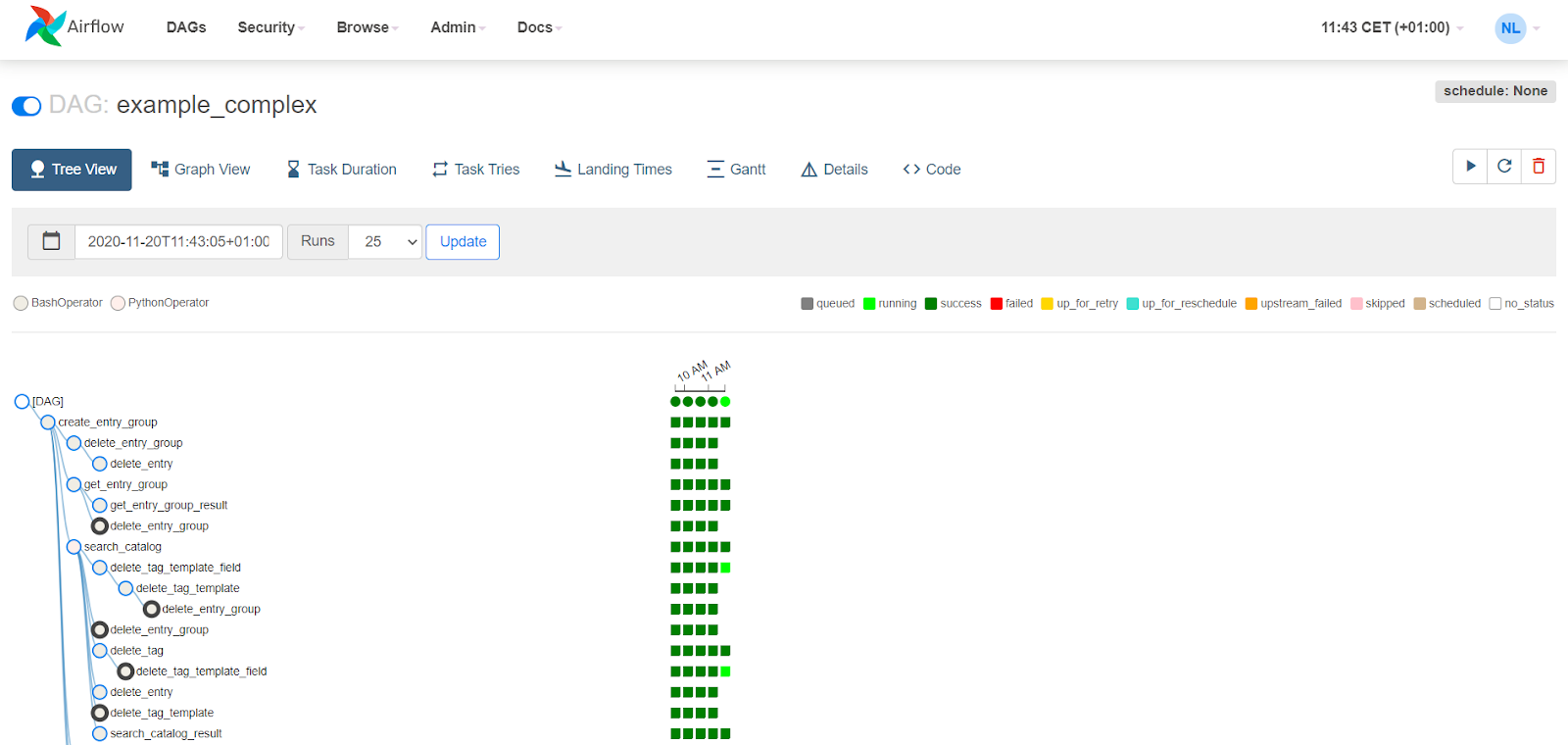

A scheduler executes tasks on a set of workers according to any dependencies you specify, for example waiting for a Spark job to complete and then forwarding the output to a target. You add tasks and dependencies programmatically, with simple parallelization that the executor enables automatically. The Airflow UI lets you visualize pipelines running in production, monitor progress, and troubleshoot issues when needed. Airflow's visual DAGs also provide data lineage, which facilitates debugging of data flows and aids in auditing and data governance. And you have several options for deployment, including self-service open source or a managed service.

Airflow is well suited to:

- Automatically organizing, executing, and monitoring data flow
- Handling data pipelines that change slowly (days or weeks, not hours or minutes), are related to a specific time interval, or are pre-scheduled
- Building ETL pipelines that extract batch data from multiple sources and run Spark jobs or other data transformations
- Machine learning model training, such as triggering a SageMaker job
- Backups and other DevOps tasks, such as submitting a Spark job and storing the resulting data on a Hadoop cluster

As with most applications, Airflow is not a panacea and is not appropriate for every use case. There are also certain technical considerations even for ideal use cases.

Prior to the emergence of Airflow, common workflow or job schedulers (Azkaban and Apache Oozie, for example) managed Hadoop jobs and generally required multiple configuration files and file system trees to create DAGs. In Airflow, a single Python file can be enough to create a DAG. And because Airflow can connect to a variety of data sources (APIs, databases, data warehouses, and so on), it provides greater architectural flexibility.
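To make the idea of running tasks "according to any dependencies you specify" concrete, here is a minimal sketch in plain Python. It is not Airflow code and not how Airflow's scheduler is implemented; it only uses the standard library's `graphlib` to show how a DAG of dependencies determines which tasks may run, and which could run in parallel. The task names (`extract_api`, `extract_db`, `spark_transform`, `load_target`) and the `run_pipeline` helper are hypothetical, chosen just for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dependencies = {
    "extract_api": set(),
    "extract_db": set(),
    "spark_transform": {"extract_api", "extract_db"},
    "load_target": {"spark_transform"},
}


def run_pipeline(dependencies, run_task):
    """Run tasks in dependency order.

    Returns the list of batches; tasks in the same batch had all of their
    upstream tasks finished at the same time, so a real executor could run
    them in parallel.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    batches = []
    while sorter.is_active():
        # Tasks whose upstream dependencies have all been marked done.
        ready = sorted(sorter.get_ready())
        for name in ready:
            run_task(name)      # here we run sequentially, for simplicity
            sorter.done(name)   # unblocks downstream tasks
        batches.append(ready)
    return batches


executed = []
batches = run_pipeline(dependencies, executed.append)
print(batches)
```

In this sketch the two extract tasks land in the first batch because neither depends on anything, while `load_target` only becomes runnable once `spark_transform` has finished, which mirrors the "wait for a Spark job to complete and then forward the output to a target" example above.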
